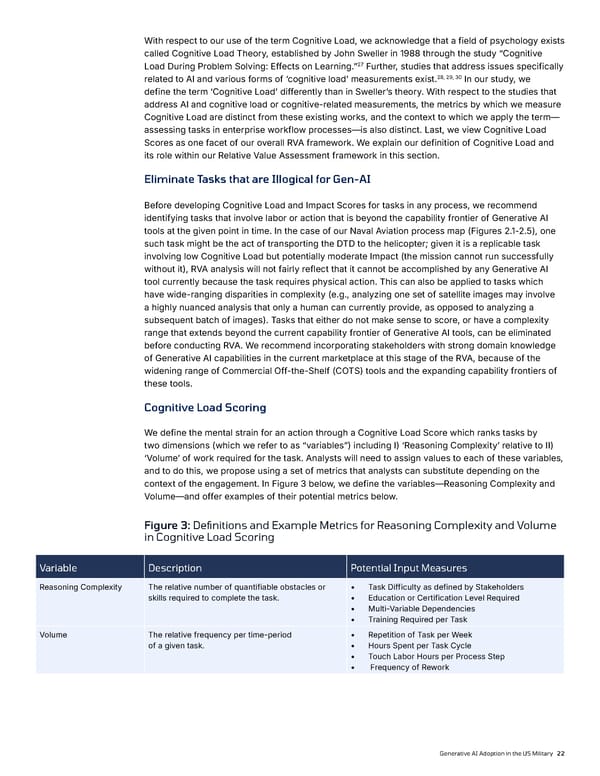

22 With respect to our use of the term Cognitive Load, we acknowledge that a field of psychology called Cognitive Load Theory exists, established by John Sweller in 1988 through the study “Cognitive Load During Problem Solving: Effects on Learning.”27 Further, studies exist that address issues specifically related to AI and various forms of ‘cognitive load’ measurement.28, 29, 30 In our study, we define the term ‘Cognitive Load’ differently than Sweller’s theory does. With respect to the studies that address AI and cognitive load or cognitive-related measurements, the metrics by which we measure Cognitive Load are distinct from these existing works, and the context to which we apply the term (assessing tasks in enterprise workflow processes) is also distinct. Last, we view Cognitive Load Scores as one facet of our overall RVA framework. We explain our definition of Cognitive Load and its role within our Relative Value Assessment framework in this section.

Eliminate Tasks that are Illogical for Gen-AI

Before developing Cognitive Load and Impact Scores for tasks in any process, we recommend identifying tasks that involve labor or action beyond the capability frontier of Generative AI tools at that point in time. In our Naval Aviation process map (Figures 2.1-2.5), one such task might be transporting the DTD to the helicopter. It is a replicable task involving low Cognitive Load but potentially moderate Impact (the mission cannot run successfully without it), yet RVA analysis will not fairly reflect that no Generative AI tool can currently accomplish it, because the task requires physical action. The same screening can be applied to tasks with wide-ranging disparities in complexity (e.g., analyzing one set of satellite images may involve a highly nuanced analysis that only a human can currently provide, whereas analyzing a subsequent batch of images may not).
Tasks that either do not make sense to score, or whose complexity range extends beyond the current capability frontier of Generative AI tools, can be eliminated before conducting RVA. We recommend involving stakeholders with strong domain knowledge of Generative AI capabilities in the current marketplace at this stage of the RVA, because of the widening range of Commercial Off-the-Shelf (COTS) tools and their expanding capability frontiers.

Cognitive Load Scoring

We define the mental strain of an action through a Cognitive Load Score, which ranks tasks along two dimensions (which we refer to as “variables”): I) ‘Reasoning Complexity’ relative to II) the ‘Volume’ of work required for the task. Analysts will need to assign values to each of these variables; to do this, we propose a set of metrics that analysts can substitute depending on the context of the engagement. In Figure 3 below, we define the two variables, Reasoning Complexity and Volume, and offer examples of their potential metrics.

Figure 3: Definitions and Example Metrics for Reasoning Complexity and Volume in Cognitive Load Scoring

| Variable | Description | Potential Input Measures |
| --- | --- | --- |
| Reasoning Complexity | The relative number of quantifiable obstacles or skills required to complete the task. | Task Difficulty as defined by Stakeholders; Education or Certification Level Required; Multi-Variable Dependencies; Training Required per Task |
| Volume | The relative frequency per time-period of a given task. | Repetition of Task per Week; Hours Spent per Task Cycle; Touch Labor Hours per Process Step; Frequency of Rework |

Generative AI Adoption in the US Military
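To make the screening and two-variable scoring described above concrete, the following is a minimal sketch and not the report's method. It assumes analysts rate each chosen metric on a 1-5 scale and that each variable is the simple average of its metric ratings; both are illustrative assumptions. The `Task` class, the `requires_physical_action` flag, and the function names are hypothetical. Because this section does not state how Reasoning Complexity and Volume are combined, the sketch returns them as a pair rather than collapsing them into one number.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Task:
    name: str
    requires_physical_action: bool        # hypothetical screening flag
    reasoning_ratings: Dict[str, float]   # analyst ratings, assumed 1-5 scale
    volume_ratings: Dict[str, float]      # analyst ratings, assumed 1-5 scale


def screen_tasks(tasks: List[Task]) -> List[Task]:
    """Drop tasks outside the scope of scoring (here: physical tasks only)."""
    return [t for t in tasks if not t.requires_physical_action]


def _average(ratings: Dict[str, float]) -> float:
    """Simple mean of metric ratings; an assumed aggregation rule."""
    if not ratings:
        raise ValueError("at least one metric rating is required")
    return sum(ratings.values()) / len(ratings)


def cognitive_load(task: Task) -> Tuple[float, float]:
    """Return (reasoning_complexity, volume) as a pair, not a combined score."""
    return _average(task.reasoning_ratings), _average(task.volume_ratings)


if __name__ == "__main__":
    tasks = [
        # From the process-map example: physical, so screened out before scoring.
        Task("Transport DTD to helicopter", True,
             {"task_difficulty": 1}, {"repetitions_per_week": 3}),
        # Hypothetical task with made-up ratings, for illustration only.
        Task("Draft mission summary", False,
             {"task_difficulty": 4, "training_required": 2},
             {"repetitions_per_week": 5, "hours_per_cycle": 3}),
    ]
    for task in screen_tasks(tasks):
        print(task.name, cognitive_load(task))
```

Analysts can swap the metric keys for any of the example input measures, or for others suited to the engagement, without changing the functions. A real screening step would also need a rule for tasks with wide complexity ranges, which this sketch omits.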
